Stop Fighting Pipelines and Start Trusting Data

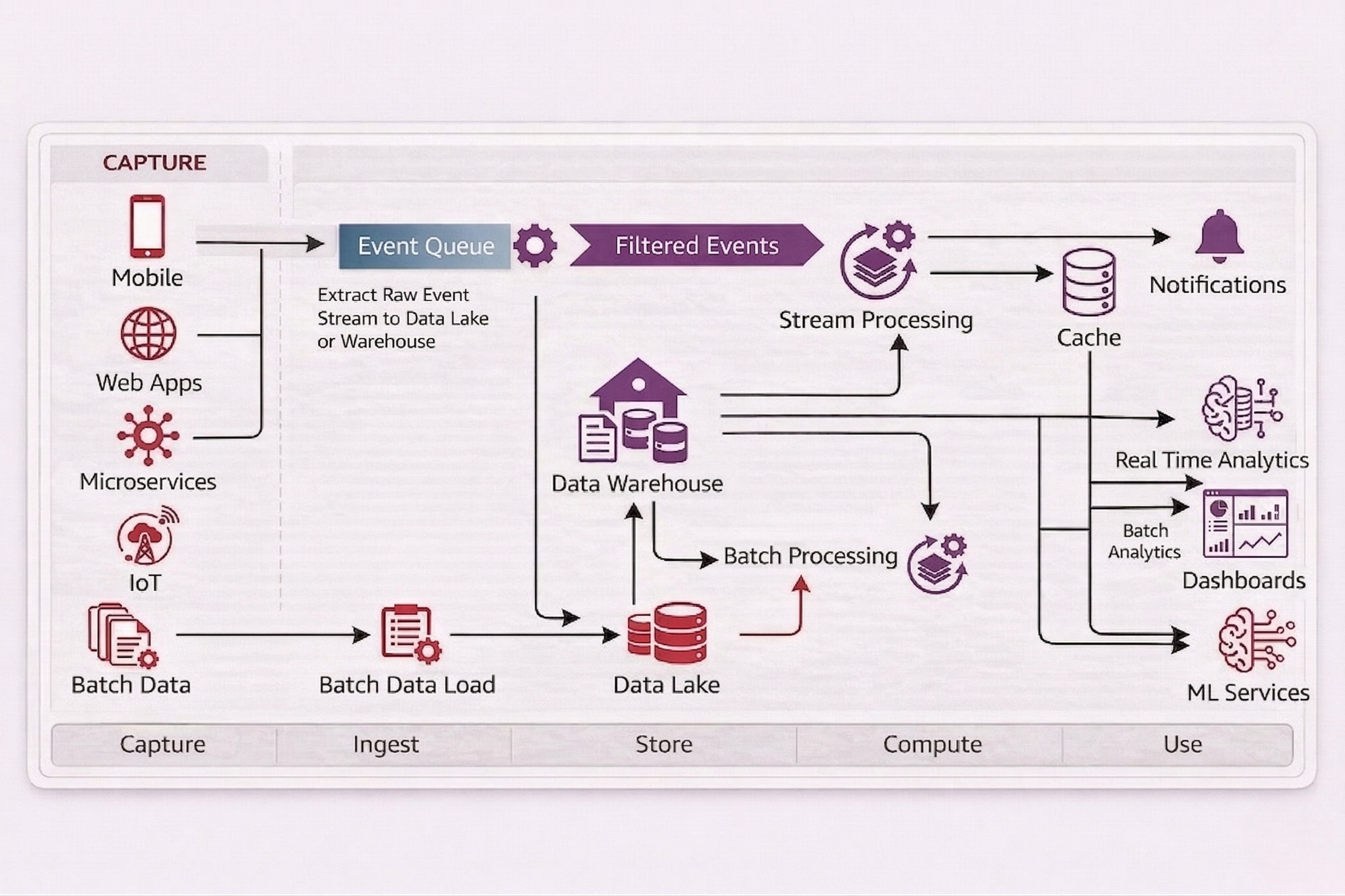

Design ingestion and transformation pipelines with SLAs, lineage, and monitoring built in.

When “It Ran Yesterday” Is the Biggest Data Risk in the Business

Most data pipeline setups fail under real pressure:

Fragmentation

Jobs live in scattered scripts and tools. One schema change breaks a month-end load. Decisions slip.

Silos

Each team builds its own flows. Metrics drift. Sales, finance, and operations argue instead of acting.

Key Person Risk

Manual runs and ad-hoc exports fill the gaps. People become the control plane. Error risk surges.

Inconsistency

AI and analytics teams pull from different versions of the truth. Models look impressive but don’t match the ledger.

No Accountability

No clear ownership exists for pipelines, SLAs, or incident response. Outages repeat.

The Result:

Higher cloud and labor cost, slower reporting, and material risk when results reach investors, lenders, or auditors.

Data Pipelines as an Operating Asset

A disciplined pipeline layer changes your economics:

Reduce manual effort

Automated, monitored flows replace manual extracts and reconciliations. Teams reclaim time for analysis.

Cut decision latency

Data lands on predictable schedules. Leadership runs reviews days earlier.

Lower incident risk

Tests and lineage reduce bad-data incidents in board decks and regulatory filings.

Control platform cost

Efficient jobs and storage patterns avoid runaway warehouse and compute bills.

Enable AI and BI

Clean, consistent feeds give BI, analytics, and ML a stable foundation.

"Ignoring the pipeline layer keeps you dependent on spreadsheets, hero engineers, and fragile timing."

The Team Behind Robust Data Pipelines

Data Architects own the end-to-end design, patterns, and governance standards.

Senior Data Engineers implement ingestion, transformation, and orchestration logic.

Analytics Engineers define semantic models and KPI layers that business teams actually use.

Ops/Platform Engineers monitor performance, cost, and failures, and maintain SLAs.

Where Pipeline Engineering Pays Off Quickly

SaaS / Subscription Business

Revenue, churn, and product usage data live in separate systems. Month-end numbers differ by team.

Build pipelines from billing, product, and CRM systems into a unified subscription model with governed metrics.

One version of MRR, ARR, and churn. Forecast prep time drops. Board packs become repeatable.

Ecommerce and Retail

Orders, returns, marketing, and inventory feeds are stitched manually before every review. Latency and errors persist.

Build event and transaction pipelines from ecommerce, marketplaces, POS, ERP, and ad platforms into a single warehouse.

Reliable daily trade views. Less manual work. Faster stock, pricing, and marketing decisions.

Manufacturing and Operations

Production, quality, and maintenance data sit in plant systems and spreadsheets. No unified view of performance.

Build pipelines from MES, ERP, quality, and CMMS systems into a standardized operations model.

Shift, line, and plant metrics update automatically. Root-cause work starts from data, not anecdotes.

Pipelines With Tests, Lineage, and a Kill Switch

Automated testing

- Column-level checks for ranges, nulls, and referential integrity.

- Business-rule tests for key metrics.
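
As a rough illustration of the checks listed above, here is a minimal sketch in plain Python. The function names, the row format (a list of dicts), and the tolerance value are assumptions made for this example, not part of any specific testing tool. Each column check returns the indexes of the rows that fail, so a pipeline step can decide whether to halt or quarantine them.

```python
from typing import Any

Row = dict[str, Any]


def check_not_null(rows: list[Row], column: str) -> list[int]:
    """Return indexes of rows where the column is missing or None."""
    return [i for i, r in enumerate(rows) if r.get(column) is None]


def check_range(rows: list[Row], column: str, low: float, high: float) -> list[int]:
    """Return indexes of rows whose non-null value falls outside [low, high]."""
    return [
        i for i, r in enumerate(rows)
        if r.get(column) is not None and not (low <= r[column] <= high)
    ]


def check_referential_integrity(
    rows: list[Row], column: str, parent_keys: set[Any]
) -> list[int]:
    """Return indexes of rows whose non-null key has no match in the parent table."""
    return [
        i for i, r in enumerate(rows)
        if r.get(column) is not None and r[column] not in parent_keys
    ]


def check_metric_total(
    rows: list[Row], column: str, expected_total: float, tolerance: float = 0.01
) -> bool:
    """Business-rule test: the loaded total must match a control total from the source."""
    actual = sum(r[column] for r in rows if r.get(column) is not None)
    return abs(actual - expected_total) <= tolerance
```

In practice these checks would run after each load, with the control total taken from the source system (for example, the ledger) rather than hard-coded.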

Lineage and impact analysis

- Trace every metric back to its source.

- Assess impact before changing schemas or logic.
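
Both lineage questions reduce to a graph traversal. The sketch below assumes lineage is stored as a simple mapping from each dataset to its direct upstream inputs; the dataset names are hypothetical and chosen only to mirror the subscription example on this page.

```python
from collections import deque

# Hypothetical lineage map: dataset -> its direct upstream inputs.
LINEAGE: dict[str, list[str]] = {
    "mrr_dashboard": ["subscription_metrics"],
    "churn_report": ["subscription_metrics", "stg_product_usage"],
    "subscription_metrics": ["stg_billing", "stg_crm"],
    "stg_billing": ["raw_billing"],
    "stg_crm": ["raw_crm"],
    "stg_product_usage": ["raw_product_events"],
}


def _reachable(graph: dict[str, list[str]], start: str) -> set[str]:
    """Breadth-first walk returning every node reachable from start (excluding start)."""
    seen: set[str] = set()
    queue = deque(graph.get(start, []))
    while queue:
        node = queue.popleft()
        if node in seen:
            continue
        seen.add(node)
        queue.extend(graph.get(node, []))
    return seen


def upstream_sources(lineage: dict[str, list[str]], dataset: str) -> set[str]:
    """Trace a metric or dataset back to everything it depends on."""
    return _reachable(lineage, dataset)


def downstream_impact(lineage: dict[str, list[str]], dataset: str) -> set[str]:
    """List everything that could break if the given dataset changes."""
    # Invert the map so edges point from an input to its consumers.
    consumers: dict[str, list[str]] = {}
    for child, parents in lineage.items():
        for parent in parents:
            consumers.setdefault(parent, []).append(child)
    return _reachable(consumers, dataset)
```

Calling `downstream_impact(LINEAGE, "raw_billing")` before a schema change to the billing source lists every staging model, metric, and report that needs review.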

Monitoring and alerting

- Track job success, duration, volume, and freshness.

- Alert engineers before business users see issues.
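
A minimal sketch of how the four signals above (success, duration, volume, freshness) might be evaluated for a single job run. The `JobRun` and `Thresholds` structures and their field names are assumptions for illustration; a real setup would read these values from the orchestrator's run metadata and route the alerts to an on-call channel.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class JobRun:
    name: str
    succeeded: bool
    duration_s: float
    rows_loaded: int
    finished_at: datetime


@dataclass
class Thresholds:
    max_duration_s: float
    min_rows: int
    max_staleness: timedelta


def evaluate_run(run: JobRun, limits: Thresholds, now: datetime) -> list[str]:
    """Return one alert message per breached threshold; an empty list means healthy."""
    alerts: list[str] = []
    if not run.succeeded:
        alerts.append(f"{run.name}: job failed")
    if run.duration_s > limits.max_duration_s:
        alerts.append(f"{run.name}: ran {run.duration_s:.0f}s, limit {limits.max_duration_s:.0f}s")
    if run.rows_loaded < limits.min_rows:
        alerts.append(f"{run.name}: loaded {run.rows_loaded} rows, expected at least {limits.min_rows}")
    if now - run.finished_at > limits.max_staleness:
        alerts.append(f"{run.name}: data is stale, last finished {run.finished_at.isoformat()}")
    return alerts
```

Checking volume and freshness alongside success matters because a job can finish without errors and still deliver an empty or outdated table.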

Documentation and runbooks

- Maintain clear, versioned documentation for pipelines and datasets.

- Provide runbooks for failure scenarios and rollback procedures.

Business effect: Lower risk of bad data in critical reports, faster recovery, and easier audits.

From Ad-Hoc Jobs to an Operated Data Pipeline Platform

Phase 1 – Stabilize and Audit

- Inventory current pipelines, scripts, and manual data flows.

- Identify single points of failure and high-risk reports.

- Stabilize critical jobs with basic testing and monitoring.

Phase 2 – Standardize and Deploy

- Define pipeline patterns for ingestion and transformation.

- Implement orchestration, error handling, and data contracts.

- Roll out curated datasets for priority domains like finance and sales.
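
To make the idea of a data contract concrete, here is a small sketch that validates an incoming record against an agreed set of fields and types. The contract contents and the `contract_violations` helper are hypothetical examples, not a reference to any particular contract framework.

```python
from typing import Any

# Hypothetical contract for a billing feed: field name -> required Python type.
INVOICE_CONTRACT: dict[str, type] = {
    "invoice_id": str,
    "customer_id": str,
    "amount": float,
    "currency": str,
}


def contract_violations(record: dict[str, Any], contract: dict[str, type]) -> list[str]:
    """Return a list of human-readable problems; an empty list means the record conforms."""
    problems: list[str] = []
    for field, expected in contract.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected):
            actual = type(record[field]).__name__
            problems.append(f"{field}: expected {expected.__name__}, got {actual}")
    # Fields the producer added without agreement are flagged too.
    for field in sorted(record.keys() - contract.keys()):
        problems.append(f"unexpected field: {field}")
    return problems
```

Running a check like this at the ingestion boundary surfaces an upstream schema change as an explicit contract failure instead of a broken month-end load.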

Phase 3 – Scale and Optimize

- Extend coverage to new systems and use cases.

- Optimize job schedules, compute usage, and storage layouts.

- Provide robust feeds for BI, analytics, and ML with clear SLAs.

Rudder Analytics positions data pipelines as operational infrastructure, with four goals:

- Reduce dependency on fragile scripts and hero culture.

- Lower the chance of bad-data events reaching executives, investors, or regulators.

- Deliver predictable data latency so reviews and forecasts run on time.

- Provide a durable foundation for BI and AI that respects SME budget and complexity constraints.

If leadership already depends on data for revenue, cost, and risk decisions, pipeline reliability is not optional.

Treat Data Pipelines as Critical Infrastructure

Revenue, margin, and risk decisions already run on your pipelines. Rudder Analytics engineers data pipelines that behave like an operated system, not a collection of scripts.