Make Analysis a System, Not Heroic Effort

Standardise models, methods, and outputs so analysis drives decisions, not ad-hoc decks.

SELECT

date_trunc('month', event_time) as month,

sum(revenue) as mrr,

count(distinct user_id) as active_users

FROM prod.transactions

WHERE status = 'complete'

GROUP BY 1

Monthly Revenue Retention

When “Analysis” Is Just Reporting With Extra Steps

Most teams call it analysis when they:

- Export from a BI tool into Excel and build yet another pivot.

- Recreate the same metrics in different tools and get different answers.

- Run ad-hoc queries that nobody can reproduce a week later.

- Build models on CSVs that never connect back to the warehouse.

Result: more charts, same decisions. Higher risk when numbers hit the P&L.

The Rudder Analytics Engineered Layer

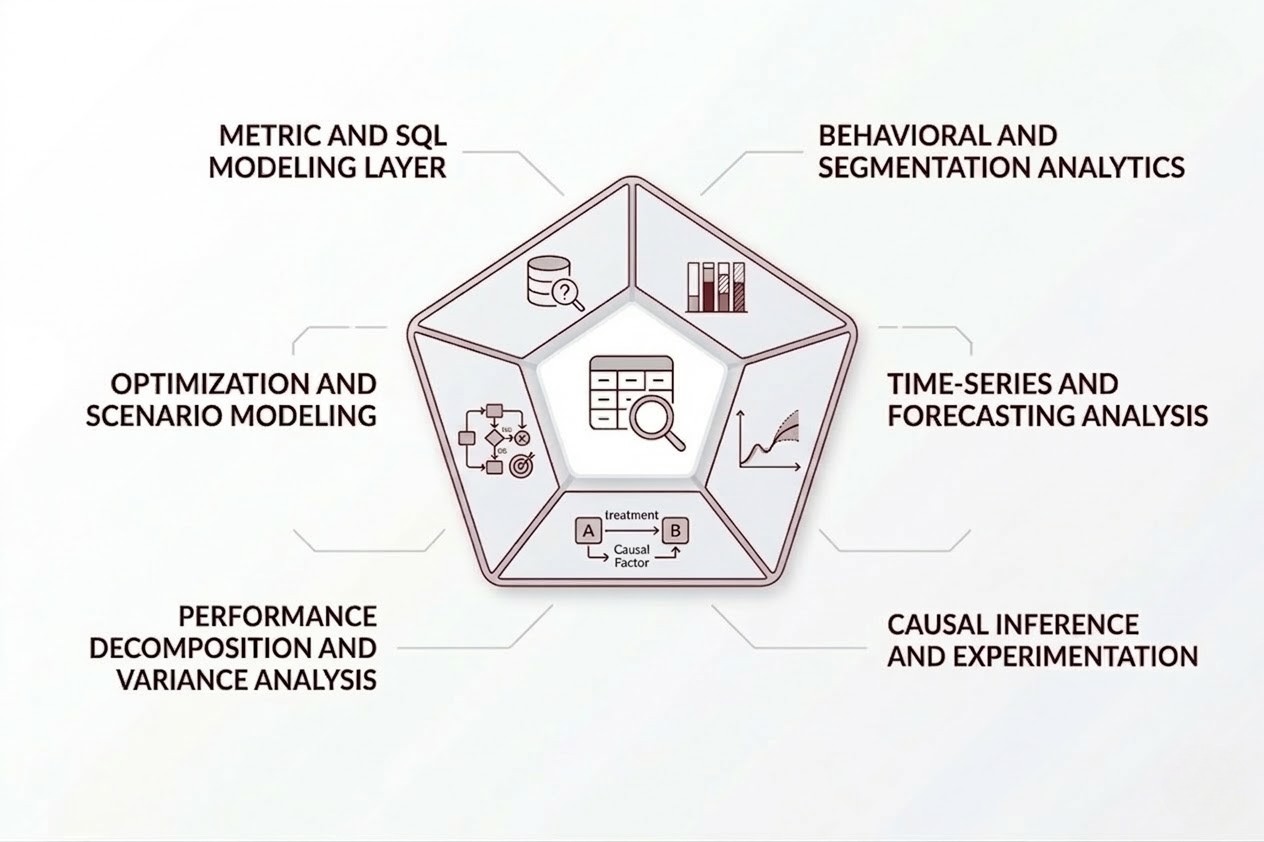

Rudder Analytics treats data analysis as an engineered layer on top of your warehouse and pipelines, not as one-off work.

What Data Analysis Means in Practice at Rudder Analytics

Data analysis is not “dashboards” and not just “ML”. It is a stack:

Well-defined metric layer

SQL/dbt models for revenue, cost, margin, churn, unit economics.

Statistical backbone

Hypothesis tests, time-series models, and uncertainty intervals, not guesswork.

Cohorts and segments

Repeatable group definitions for customers, products, and events.

Causal structure

Controlled experiments and quasi-experiments where A/B is not possible.

Decision models

Optimization and scenario simulations tied to business constraints.

Business effect

Faster, higher-confidence decisions on revenue, cost, risk, and speed.

Analysis Patterns We Actually Deploy

Metric and SQL Modeling Layer

Technical Focus

- Design metric definitions (MRR, ARR, CAC, LTV, OTIF, OEE, etc.) as SQL/dbt models.

- Implement SCD logic, cohort tables, and event schemas that match real behavior.

- Apply consistent join keys and hierarchies to avoid silent double-counting.

Business effect

One version of each metric across teams; less time reconciling, more time deciding.

select * from {{ ref('fct_revenue') }} where status = 'active'
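To make the double-counting point concrete, here is a minimal sketch using Python's built-in sqlite3 module. The tables `orders` and `order_items` and their values are hypothetical; the pattern it illustrates is joining at mismatched grain and one possible way to avoid it.

```python
import sqlite3

# Hypothetical tables: one row per order, and one row per item in an order.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (order_id INTEGER PRIMARY KEY, revenue REAL);
    CREATE TABLE order_items (order_id INTEGER, sku TEXT);
    INSERT INTO orders VALUES (1, 100.0), (2, 50.0);
    INSERT INTO order_items VALUES (1, 'A'), (1, 'B'), (2, 'C');
""")

# Naive join: order 1 has two items, so its revenue is summed twice.
naive_total = conn.execute("""
    SELECT SUM(o.revenue)
    FROM orders o
    JOIN order_items i ON i.order_id = o.order_id
""").fetchone()[0]

# Safer: aggregate items to the order grain before joining.
safe_total = conn.execute("""
    SELECT SUM(o.revenue)
    FROM orders o
    JOIN (SELECT order_id, COUNT(*) AS n_items
          FROM order_items
          GROUP BY order_id) i
      ON i.order_id = o.order_id
""").fetchone()[0]

print("naive:", naive_total, "safe:", safe_total)
```

The naive query raises no error, which is why this kind of inflation tends to go unnoticed until two teams compare numbers.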

Behavioral and Segmentation Analytics

Technical Focus

- Build customer, account, and product embeddings or feature sets.

- Use clustering, RFM, and survival models to classify segments.

- Standardize segment labels in the warehouse for reuse in BI and campaigns.

Business effect

Targeted sales and marketing decisions that raise revenue without raising CAC.
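As an illustration of the RFM approach mentioned above, the sketch below computes recency, frequency, and monetary values per customer and maps them to a label. The order data, the `as_of` date, and the thresholds in `label` are all assumptions chosen for the example, not recommended cut-offs.

```python
from datetime import date

# Hypothetical order history: (customer_id, order_date, amount).
orders = [
    ("c1", date(2024, 6, 20), 120.0),
    ("c1", date(2024, 6, 28), 80.0),
    ("c2", date(2024, 1, 15), 40.0),
    ("c3", date(2024, 5, 2), 300.0),
    ("c3", date(2024, 3, 9), 150.0),
    ("c3", date(2024, 5, 20), 90.0),
]
as_of = date(2024, 7, 1)

def rfm_table(orders, as_of):
    """Return {customer_id: (recency_days, frequency, monetary)}."""
    out = {}
    for cust, day, amount in orders:
        rec, freq, mon = out.get(cust, (None, 0, 0.0))
        days = (as_of - day).days
        rec = days if rec is None else min(rec, days)
        out[cust] = (rec, freq + 1, mon + amount)
    return out

def label(recency, frequency, monetary):
    # Illustrative thresholds only; real cut-offs depend on the business.
    if recency <= 30 and frequency >= 2:
        return "active_repeat"
    if recency > 90:
        return "lapsed"
    return "occasional"

rfm = rfm_table(orders, as_of)
segments = {cust: label(*values) for cust, values in rfm.items()}
print(segments)
```

In practice the resulting labels would be written to a warehouse table so that BI and campaign tools read the same segment definition.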

Time-Series and Forecasting Analysis

Technical Focus

- Apply ARIMA, Prophet-like, or hierarchical models on demand, revenue, or traffic.

- Decompose seasonality, trend, and event-driven spikes.

- Backtest models and track forecast error over time as a warehouse table.

Business effect

Better inventory, staffing, or capacity decisions; fewer expensive surprises.
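The backtesting idea can be sketched with a rolling-origin loop. The example below uses a seasonal-naive forecast as a stand-in for whatever model is actually deployed. The demand series is hypothetical, and each row of `rows` has the shape one might store in a forecast-error table.

```python
def seasonal_naive(history, season):
    """Forecast the next point as the value observed one season ago."""
    return history[-season]

def backtest(series, season, min_train):
    """Rolling-origin backtest: one-step-ahead forecasts with their errors."""
    rows = []
    for t in range(min_train, len(series)):
        forecast = seasonal_naive(series[:t], season)
        actual = series[t]
        rows.append({
            "t": t,
            "forecast": forecast,
            "actual": actual,
            "abs_pct_error": abs(actual - forecast) / actual,
        })
    return rows

# Hypothetical quarterly demand with a repeating pattern and mild growth.
demand = [100, 120, 90, 150, 110, 130, 100, 160]
rows = backtest(demand, season=4, min_train=4)
mape = sum(r["abs_pct_error"] for r in rows) / len(rows)
print(f"MAPE over {len(rows)} forecasts: {mape:.3f}")
```

A simple baseline like this is also useful as a reference point: a more complex model that cannot beat it in backtests is hard to justify.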

Causal Inference and Experimentation

Technical Focus

- Design A/B and multivariate tests with clear power and sample-size calculations.

- Implement CUPED or similar variance reduction where appropriate.

- When RCTs are impossible, use diff-in-diff, matching, or synthetic control methods.

Business effect

Decisions on pricing, features, or campaigns based on incremental impact, not correlation.
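For the power and sample-size step, a minimal sketch using the standard normal-approximation formula for comparing two proportions is shown below. The baseline and variant conversion rates are hypothetical, and the function name `sample_size_per_arm` is introduced here for illustration only.

```python
from math import ceil, sqrt
from statistics import NormalDist

def sample_size_per_arm(p_base, p_variant, alpha=0.05, power=0.80):
    """Approximate users needed per arm for a two-sided two-proportion z-test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p_base + p_variant) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p_base * (1 - p_base) + p_variant * (1 - p_variant))
    ) ** 2
    return ceil(numerator / (p_variant - p_base) ** 2)

# Hypothetical test: detect a lift from 10% to 12% conversion.
n = sample_size_per_arm(0.10, 0.12)
print("users per arm:", n)
```

Halving the detectable lift roughly quadruples the required sample, which is usually the main constraint when deciding which tests are worth running.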

Performance Decomposition and Variance Analysis

Technical Focus

- Decompose performance shifts into mix, price, volume, and efficiency components.

- Build variance bridges in SQL and Python, with reusable templates.

- Write results back to the warehouse as structured tables for BI.

Business effect

Fast, defensible explanations for revenue or cost swings in reviews.
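A variance bridge can be sketched in a few lines. The example below splits a revenue change into a volume effect (unit changes valued at prior prices) and a price effect (price changes valued at current volumes). It assumes both periods contain the same products, and it folds product-mix shifts into the volume term; the product names and figures are hypothetical.

```python
def price_volume_bridge(prior, current):
    """Split a revenue change into volume and price effects.

    prior/current map product -> (unit_price, units). Assumes both periods
    contain the same products.
    """
    volume_effect = 0.0
    price_effect = 0.0
    for product, (p0, q0) in prior.items():
        p1, q1 = current[product]
        volume_effect += (q1 - q0) * p0  # volume change at prior prices
        price_effect += (p1 - p0) * q1   # price change at current volumes
    return {
        "prior_revenue": sum(p * q for p, q in prior.values()),
        "volume_effect": volume_effect,
        "price_effect": price_effect,
        "current_revenue": sum(p * q for p, q in current.values()),
    }

# Hypothetical two-product example.
prior = {"basic": (10.0, 100), "pro": (30.0, 40)}
current = {"basic": (11.0, 90), "pro": (30.0, 55)}
bridge = price_volume_bridge(prior, current)
print(bridge)
```

With this split the components add up exactly to the total change, so the bridge can be stored as a table and charted without a residual bar.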

Optimization and Scenario Modeling

Technical Focus

- Implement optimization models (LP/MIP/heuristics) for pricing, inventory, routing, or staffing.

- Run scenario grids and sensitivity analyses against key constraints and assumptions.

- Expose outputs via tables and APIs, not just notebooks.

Business effect

Improved margin and service levels for the same or lower cost base.
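A scenario grid is the simplest form of the analysis described above. The sketch below evaluates margin across a grid of prices and unit costs under a capacity constraint. The linear demand curve (`intercept`, `slope`), the capacity, and all values are assumptions for illustration; a real engagement would replace them with fitted demand and an LP/MIP solver where needed.

```python
from itertools import product

def margin(price, unit_cost, capacity, intercept=1000.0, slope=8.0):
    """Margin under an assumed linear demand curve, capped by capacity."""
    demand = max(0.0, intercept - slope * price)
    units = min(demand, capacity)
    return (price - unit_cost) * units

prices = [40, 50, 60, 70, 80]
unit_costs = [20, 30]  # two cost scenarios
capacity = 500

# One row per scenario, ready to be written to a table.
grid = [
    {"price": p, "unit_cost": c, "margin": margin(p, c, capacity)}
    for p, c in product(prices, unit_costs)
]

# Best price in each cost scenario.
best = {
    c: max((r for r in grid if r["unit_cost"] == c), key=lambda r: r["margin"])
    for c in unit_costs
}
for c, row in best.items():
    print(f"unit_cost={c}: best price={row['price']}, margin={row['margin']}")
```

In this toy setup the best price shifts when the unit cost changes, which is the kind of sensitivity a scenario grid is meant to surface.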

Engineering the Analysis Environment, Not Just the Outputs

Analysis on Top of the Warehouse, Not Outside It

- All analysis inputs pulled from the warehouse or lakehouse via SQL, not ad-hoc files.

- Intermediate results (cohorts, segments, model scores) stored back as tables.

- Notebooks and scripts depend on stable SQL models, not raw source tables.

Business effect: no more “that notebook used a different logic” discussions.
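As a small sketch of storing intermediate results back as tables, the example below persists a cohort definition once and has downstream queries read from it. It uses an in-memory SQLite database as a stand-in for the warehouse; the table names `fct_orders` and `dim_user_cohort` are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE fct_orders (user_id TEXT, order_date TEXT);
    INSERT INTO fct_orders VALUES
        ('u1', '2024-01-05'), ('u1', '2024-02-11'),
        ('u2', '2024-01-20'),
        ('u3', '2024-02-02'), ('u3', '2024-03-15');

    -- Persist the cohort definition once so every consumer reuses it.
    CREATE TABLE dim_user_cohort AS
    SELECT user_id, strftime('%Y-%m', MIN(order_date)) AS cohort_month
    FROM fct_orders
    GROUP BY user_id;
""")

# Notebooks and dashboards query the stored cohort table, not raw orders.
cohort_sizes = dict(conn.execute("""
    SELECT cohort_month, COUNT(*)
    FROM dim_user_cohort
    GROUP BY cohort_month
    ORDER BY cohort_month
""").fetchall())
print(cohort_sizes)
```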

Integration With AI and ML

- Convert validated analysis patterns into ML-ready feature tables.

- Use RAG or LLM agents only on top of governed, warehouse-backed data.

- Persist model predictions and confidence intervals back into the warehouse.

Business effect: AI outputs that match finance and operational metrics instead of diverging.

Engagement Model for Data Analysis

Step 1 — Problem and Metric Frame

Clarify the decision: pricing, capacity, acquisition, retention, cost, or risk.

Define target metrics and constraints: revenue, cost, risk, and time-bound goals.

Audit current metric definitions and data sources.

Step 2 — Data and Model Design

Map which warehouse models and tables will support the analysis.

Define cohorts, time windows, and event schemas.

Choose methods: descriptive, diagnostic, predictive, causal, or optimization.

Step 3 — Build and Validate

Implement SQL/dbt models and Python analysis scripts.

Run statistical checks, backtests, and sensitivity analyses.

Review results with stakeholders and stress-test edge cases.

Step 4 — Embed and Operationalize

Write results into warehouse tables, not just slides.

Wire outputs into dashboards, workflows, or decision routines.

Document assumptions and usage rules.

Step 5 — Monitor and Iterate

Track metric stability and model performance over time.

Update logic when products, markets, or behaviors shift.

Maintain documentation and lineage.

Example Analysis Threads by Domain

Revenue and Sales

- Pipeline quality analysis, win-rate decomposition, and forecast error analysis.

- Price sensitivity analysis by segment, with tangible impact on revenue and margin.

Marketing and Product

- Multi-touch attribution analysis aligned with actual revenue, not platform claims.

- Engagement funnel and feature usage analysis tied to retention and ARPU.

Supply Chain and Operations

- Demand variability and forecast error analysis at SKU-location level.

- Capacity utilization and bottleneck analysis based on actual flow data.

Finance and Risk

- Variance analysis between budget, forecast, and actuals.

- Cohort profitability and risk analysis by product, region, or customer type.

Quality, Reproducibility, and Governance

Reproducible pipelines

SQL models and Python code live in version control.

Automated checks

Tests for metric consistency and model performance.

Lineage

From dashboard or notebook back to base tables and transformations.

Documentation

Metric and model specs maintained alongside the code.

Access controls

Sensitive data constrained by role and region.

Business effect: you can show how a number was produced, to whom, and when.
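An automated metric-consistency check can be as simple as comparing the same metric across sources against a tolerance. The sketch below is one possible shape for such a test; the source names, values, and the 0.1% tolerance are hypothetical.

```python
def inconsistent_sources(values_by_source, reference, rel_tol=0.001):
    """Return sources whose value differs from the reference by more than rel_tol."""
    ref = values_by_source[reference]
    return sorted(
        source
        for source, value in values_by_source.items()
        if abs(value - ref) > rel_tol * abs(ref)
    )

# Hypothetical MRR for the same month, as reported by three systems.
mrr = {
    "warehouse_model": 125_000.0,
    "finance_report": 125_040.0,
    "bi_dashboard": 131_200.0,
}
failures = inconsistent_sources(mrr, reference="warehouse_model")
print("out of tolerance:", failures)
```

Run on a schedule, a check like this flags a diverging dashboard before the discrepancy reaches a review meeting.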

Treat Data Analysis as a Production Layer

Your revenue, cost, and risk decisions already rely on analyses. Rudder Analytics engineers data analysis as a governed, reproducible layer on top of your warehouse.